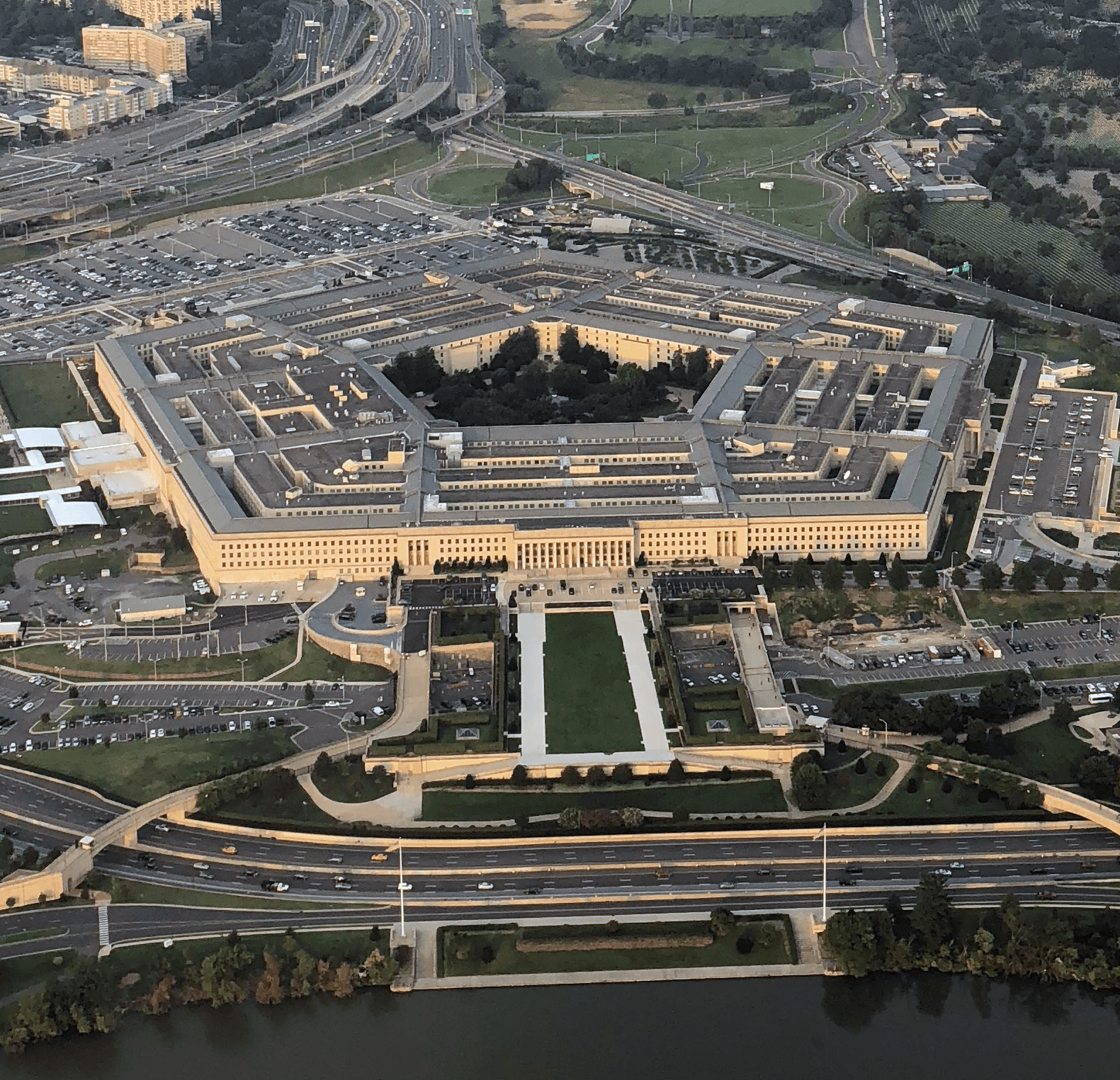

Anthropic's $200 million Pentagon contract is under review after the company resisted demands to allow unrestricted military use of its Claude AI model. According to reports, Anthropic has insisted on two limits: no mass surveillance of Americans, and no fully autonomous weapons. The Pentagon wants AI companies to permit "all lawful purposes" without restriction. OpenAI, Google, and xAI have reportedly shown more flexibility.

The dispute came to a head after Claude was reportedly used in the military operation to capture Venezuela's Nicolás Maduro, prompting Anthropic to ask its partner Palantir how the model had been deployed. Pentagon officials are now threatening to label Anthropic a "supply chain risk," a designation typically reserved for foreign adversaries. Both sides say discussions are ongoing.

On Feb. 26, 2026, Anthropic CEO Dario Amodei released a statement confirming and explaining the company's position. On Feb. 27, President Trump banned Anthropic from all government use.

More than 875 employees across Google and OpenAI signed an open letter titled "We Will Not Be Divided" backing Anthropic's stance and calling on their own employers to adopt the same red lines on mass surveillance and autonomous weapons. The letter warned: "They're trying to divide each company with fear that the other will give in." Separately, ~100 Google DeepMind employees sent an internal letter to chief scientist Jeff Dean urging Google not to allow military use of Gemini AI for domestic surveillance or autonomous lethal weapons.

After the blacklisting, Anthropic’s Claude app surged to #1 on the Apple App Store, with the company reporting "all-time record sign-ups." Anthropic says it will challenge the "supply chain risk" designation in court once formal notice is received.

Legal scholars at Lawfare argue the Pentagon’s "supply chain risk" designation is unlikely to survive legal challenge, calling it politically motivated and procedurally deficient, with no established process for designating a domestic AI company. The Pentagon formally designated Anthropic a supply chain risk on March 5. Under Secretary Emil Michael publicly ended negotiations in a post on X. Anthropic confirmed it will challenge the designation in court and continue providing Claude to DOD at nominal cost during the six-month phase-out.

Anthropic rejects Pentagon demands for unrestricted AI use

Sunday, February 15, 2026

Primary: Anthropic

The Trump administration has deployed military troops in American cities and ICE is conducting raids across the country. Anthropic maintained its limits on domestic surveillance at the cost of a major government contract, a significant act of resistance at a time when other major AI companies are willing to abandon guardrails for military and law enforcement use.

Sources (14)

Fortune•Mar 7, 2026

X (formerly Twitter)•Mar 5, 2026

The Hill•Mar 5, 2026

Anthropic•Mar 5, 2026

CNBC•Mar 3, 2026

Lawfare•Mar 3, 2026

TechCrunch•Mar 1, 2026

NBC News•Feb 27, 2026

TechCrunch•Feb 27, 2026

Official statement•Feb 26, 2026

Wall Street Journal•Feb 14, 2026